2026

AI Cracks Long-Standing Open Problems in Mathematics

Wikimol, Public Domain

Google DeepMind's AlphaEvolve combines large language models with evolutionary search to push state-of-the-art results on longstanding unsolved problems in complexity theory and discover new mathematical structures. Building on prior AI-driven advances in mathematics, AlphaEvolve represents a leap in AI autonomously generating novel insights in pure mathematics, moving beyond assistance into genuine scientific discovery.

Google DeepMind — London, England

AI moved from mathematical assistance to genuine autonomous discovery of new mathematical knowledge.

2026

AI Surpasses Human Performance on Real-World Productivity Tasks

MrChrome, CC BY 3.0

OpenAI's GPT-5.4, with a 1-million-token context window, scores 75% on the OSWorld-V benchmark, which simulates everyday desktop tasks like file management, app navigation, and productivity workflows. This edges out the human baseline of 72.4%. The model matches or exceeds professional-level performance in many knowledge-work scenarios, signaling AI's transition from conversational tool to autonomous digital coworker capable of independent execution of complex multi-step tasks.

OpenAI — San Francisco, California

AI crossed the threshold from assistant to autonomous executor of real-world productivity work.

2025

AI Identifies Drug Candidates Validated in Lab Testing

Yerkin Krykbayev, CC BY-SA 4.0

Stanford Medicine researcher Gary Peltz uses Google's AI Co-Scientist to identify drug repurposing candidates for liver fibrosis. The AI suggests three drugs. Two of them reduce fibrosis and show signs of liver regeneration in lab tests, outperforming the human researcher's own selections. The results are published in Advanced Science.

Gary Peltz, Google AI Co-Scientist — Stanford Medicine, California

One of the first cases where AI-generated hypotheses led directly to validated experimental outcomes in medicine.

2025

AI Co-Discovers New Mathematical Proof

Jeremy Barande / Ecole polytechnique, CC BY-SA 3.0

UCLA mathematician Ernest Ryu co-discovers a new mathematical proof with the assistance of OpenAI's GPT-5 Pro. The proof establishes that a popular optimization method always converges on a single solution. It is a verified, published result where AI served as a genuine collaborator in original mathematical research.

Ernest Ryu, OpenAI GPT-5 Pro — UCLA, Los Angeles, California

One of the first verified cases of AI contributing to original mathematical discovery.

2025

AI Investment Hits $150 Billion

Wikimedia Commons, CC BY-SA 4.0

Private investment in AI reached an estimated $150 billion. Mega-rounds concentrated in foundation model labs, agentic platforms, and AI-native semiconductors. Analysts debated: bubble or early innings of a platform shift?

Venture capital and corporate investors globally — Worldwide, concentrated in US and China

AI became the dominant investment thesis across the technology sector.

2025

AI Agents Go Mainstream

US Department of the Air Force, Public Domain

AI moved from answering questions to taking actions. Agents that could browse the web, execute multi-step workflows, write and deploy code, and coordinate tasks became practical products. Enterprise adoption hit 78% of organizations.

OpenAI, Anthropic, Google DeepMind, Microsoft, and hundreds of startups — Worldwide

Shifted the AI value proposition from "assistant that answers" to "agent that executes."

2025

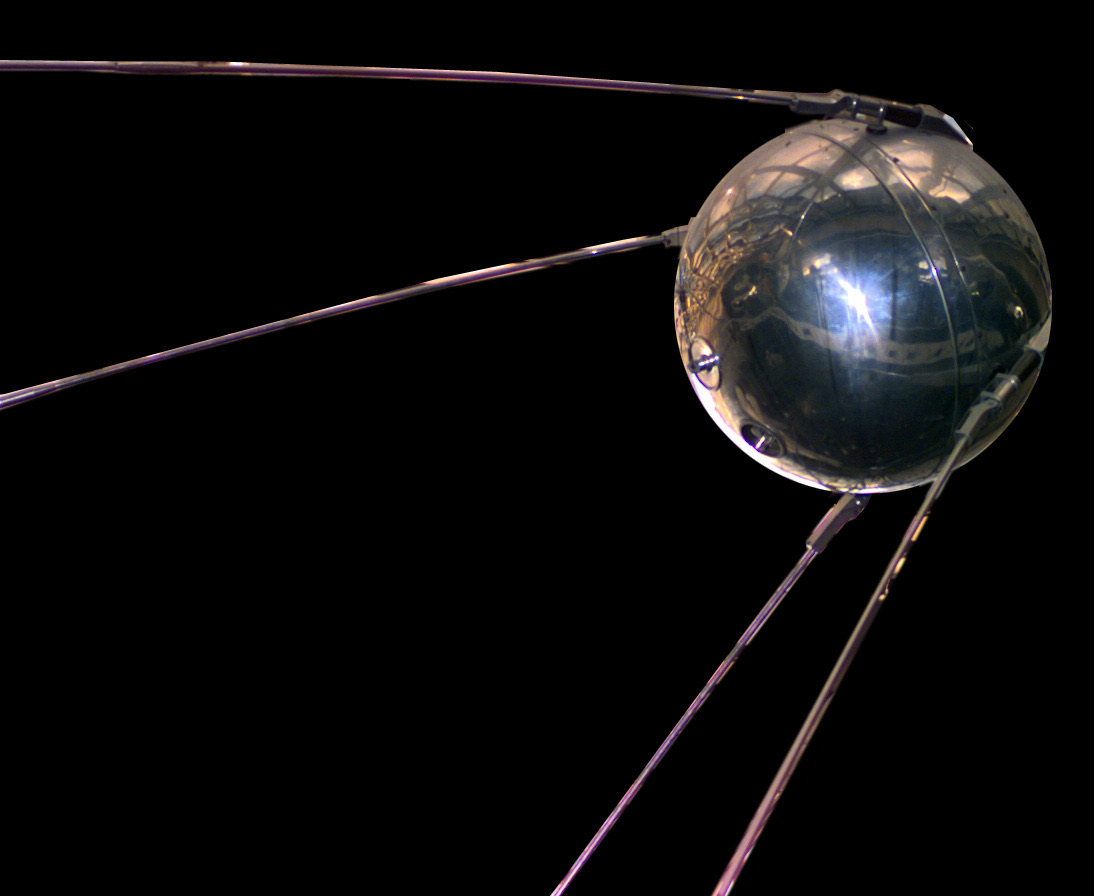

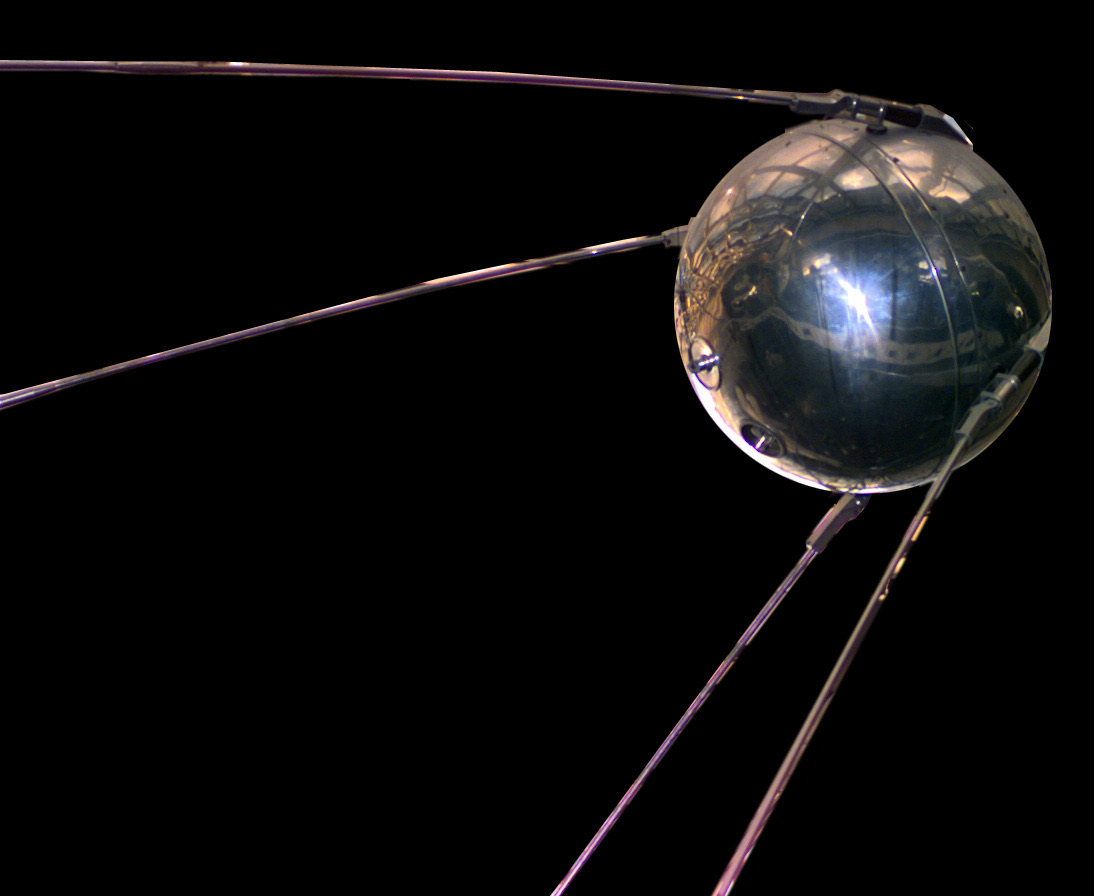

DeepSeek R1: AI's Sputnik Moment

NASA/NASM, Public Domain

Chinese startup DeepSeek released its R1 reasoning model under an open-source MIT license. It matched frontier models but was trained for ~$6 million, a fraction of American labs' budgets. It topped the US App Store within a week. Nvidia lost $593 billion in market value in a single day.

DeepSeek, founded by Liang Wenfeng — Hangzhou, China

Shattered the assumption that frontier AI required billion-dollar budgets. Marc Andreessen called it "AI's Sputnik moment."

2024

Anthropic Launches MCP: A Universal Standard for AI Integration

Wikimedia Commons, Public Domain

Anthropic released the Model Context Protocol, an open standard for connecting AI models to external tools, databases, and APIs. Described as "USB-C for AI," MCP solved the fragmented integration problem slowing enterprise AI adoption.

Anthropic — San Francisco, California

Created a vendor-neutral standard that accelerated the shift from chatbots to AI agents that take action.

2024

EU AI Act Takes Effect

Wikimedia Commons, CC BY-SA 3.0

The European Union's AI Act, the world's first comprehensive AI regulation, entered into force. It classified AI systems by risk level and imposed requirements for transparency, human oversight, and accountability.

European Parliament and Council — European Union

Set the global benchmark for AI regulation. Forced companies worldwide to consider governance as a core requirement.

2024

Sora: AI Generates Photorealistic Video

OpenAI, Fair Use

OpenAI previewed Sora, a text-to-video model that produced cinematic-quality clips. The demos were startling in their realism, showing complex scenes with consistent physics and camera movement.

OpenAI — San Francisco, California

Signaled generative AI was moving beyond still images into video, with massive implications for media and entertainment.

2024

Nobel Prizes Awarded for AI Foundations

Wikimedia Commons, CC BY 2.0

The Royal Swedish Academy of Sciences awarded the Nobel Prize in Physics to Hopfield and Hinton for foundational work on neural networks, and the Nobel Prize in Chemistry to Baker for computational protein design and to Hassabis and Jumper for protein structure prediction. AI was recognized at the highest level of scientific achievement.

John Hopfield, Geoffrey Hinton (Physics); Demis Hassabis, John Jumper, David Baker (Chemistry) — Royal Swedish Academy of Sciences, Stockholm

Formally recognized AI as a transformative scientific tool, not just a technology product.

2023

Claude Enters the Arena

TechCrunch, CC BY 2.0

Anthropic released Claude, positioning it as a safer, more steerable alternative. Founded by former OpenAI researchers, Anthropic emphasized Constitutional AI and alignment research. Claude quickly became the model of choice for users who valued thoughtfulness and nuance.

Anthropic, founded by Dario and Daniela Amodei — San Francisco, California

Proved the market could support multiple frontier model providers with distinct approaches to safety.

2023

GPT-4: Multimodal and Measurably Smarter

Photo by James Tamim, Wikimedia Commons, CC BY 2.0

OpenAI launched GPT-4, which processed both text and images. It scored around the 90th percentile on a simulated bar exam. On many standardized tests, the gap between AI and human performance had effectively closed.

OpenAI — San Francisco, California

Established that large language models could perform expert-level reasoning across diverse domains.

2022

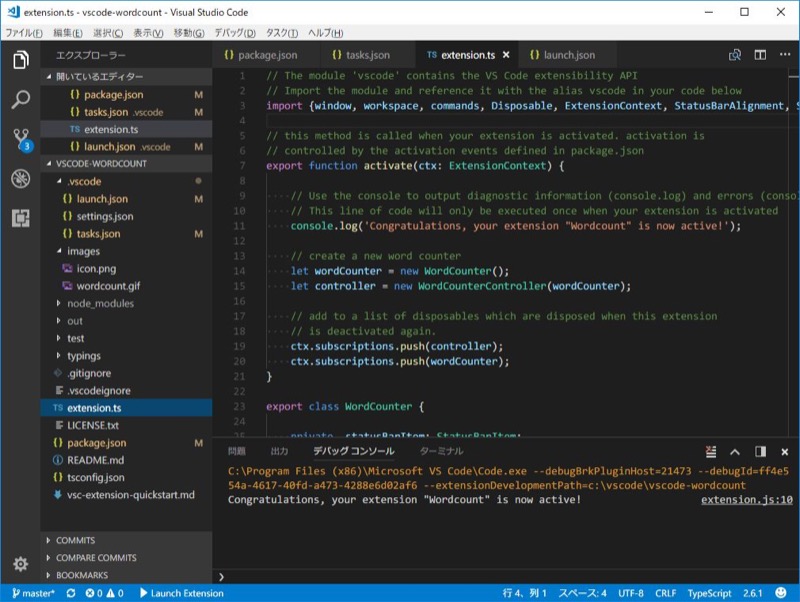

First AI-Integrated Product

digital2DNA

Digital2DNA ships its first product with AI built into the core architecture, not as an add-on but as a fundamental component of how the system operates. This sets the direction for DocSimplify and the company's broader AI strategy.

Digital2DNA — Ohio, United States

AI moved from a feature to a foundation in Digital2DNA's product architecture.

2022

ChatGPT: The Fastest-Growing Product in History

James Tamim, CC BY 2.0

OpenAI released ChatGPT, reaching an estimated 100 million users in two months - at the time, the fastest adoption of any consumer product. It made "AI" a kitchen-table word.

OpenAI — San Francisco, California

The inflection point. AI went from a tech industry topic to a society-wide conversation.

2022

Stable Diffusion Goes Open Source

Stability AI, CC BY-SA 4.0

Stability AI released Stable Diffusion as open source. Unlike DALL-E, anyone could download it, run it locally, and modify it. An explosion of community tools followed within weeks.

Stability AI, CompVis Group, Runway ML — London / Munich / New York

Democratized AI image generation. Proved open-source could accelerate AI adoption faster than any corporate launch.

2022

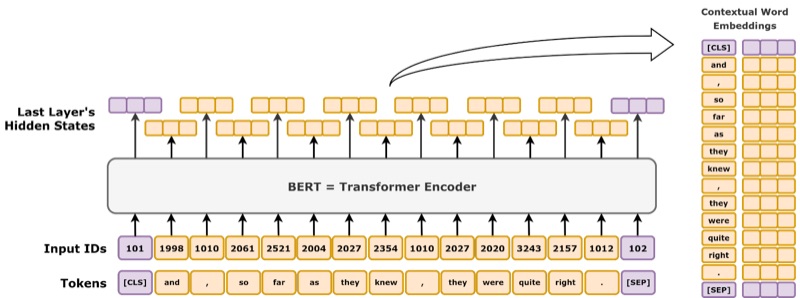

GitHub Copilot Ships to Developers

Wikimedia Commons, CC0

GitHub launched Copilot, an AI pair programmer that autocompleted code in real time. Developers debated whether it was a productivity multiplier or a crutch. Adoption was massive either way.

GitHub (Microsoft) / OpenAI — Worldwide release

First widely adopted AI coding assistant. Changed the daily workflow of millions of developers.

2021

DALL-E: Text Becomes Images

OpenAI, Fair Use

OpenAI unveiled DALL-E, a model that generated images from text descriptions. Type "an armchair shaped like an avocado" and you got one. It was novel and slightly unnerving.

OpenAI — San Francisco, California

Opened the floodgates for AI-generated visual content. Ignited debates about art, copyright, and creative work that are still unresolved.

2020

First Production Machine Learning Deployment

digital2DNA

Digital2DNA deploys its first production machine learning system, increasing customer throughput by over 300%. This marks the company's transition from traditional software development into AI-enabled solutions with measurable operational impact.

Digital2DNA — Ohio, United States

Proved that AI could deliver measurable operational impact, not just demos.

2020

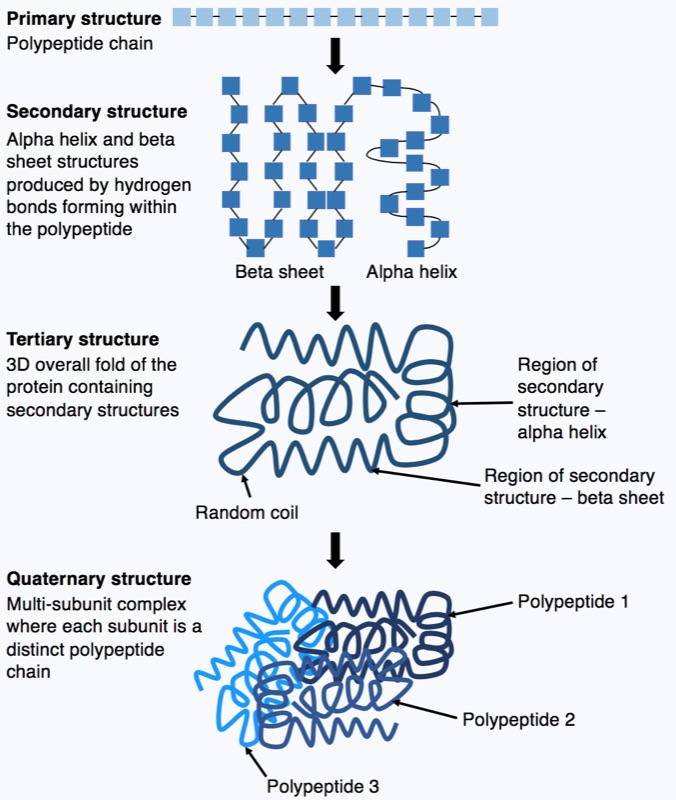

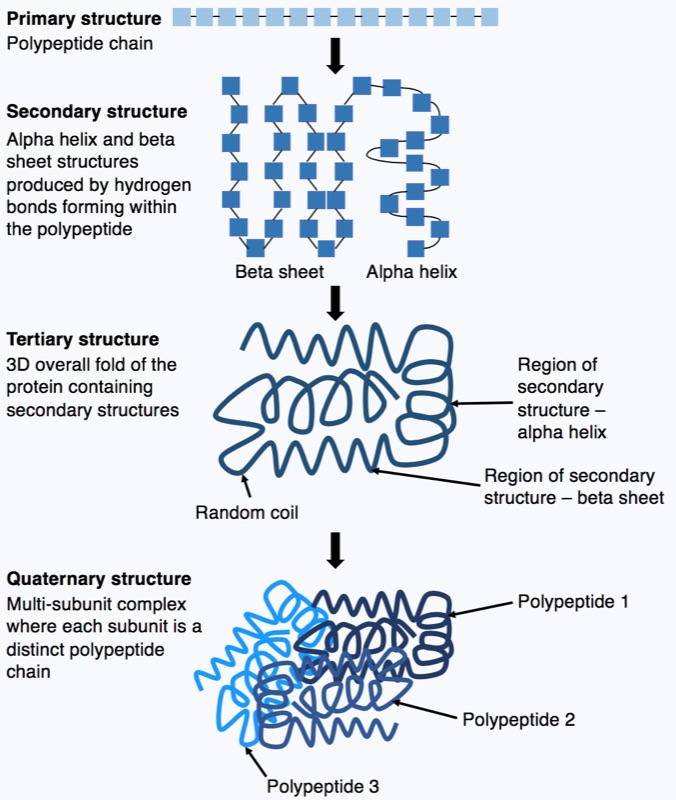

AlphaFold Solves Protein Folding

Wikimedia Commons, CC BY 4.0

DeepMind's AlphaFold predicted the 3D structure of proteins with accuracy comparable to experimental methods, effectively solving a 50-year grand challenge in biology. They later released predicted structures for nearly every known protein.

DeepMind team led by John Jumper and Demis Hassabis — DeepMind, London, England

Won the 2024 Nobel Prize in Chemistry. Compressed decades of structural biology work into minutes.

2020

GPT-3: Scale Changes Everything

TechCrunch, CC BY 2.0

OpenAI released GPT-3 with 175 billion parameters, more than 100x larger than GPT-2's 1.5 billion. It could write essays, code, and poetry from a simple prompt. Training cost an estimated $4.6 million. It was sometimes confidently wrong, but the raw capability was undeniable.

OpenAI (Tom Brown and 30 researchers) — San Francisco, California

Demonstrated that scaling language models produced emergent capabilities nobody had explicitly programmed.

2019

OpenAI Five Beats Dota 2 World Champions

Valve Corporation, Fair Use

OpenAI's team of five neural networks defeated OG, the reigning Dota 2 world champions. Dota 2 requires real-time strategy, teamwork, and long-horizon planning across a roster of more than 100 heroes, although the match was played with a restricted hero pool.

OpenAI; OG (world champion team) — San Francisco, California

AI conquered a complex real-time strategy game requiring teamwork and adaptation under uncertainty.

2018

First Integration Hub Built

digital2DNA

Digital2DNA builds its first integration hub, connecting disparate healthcare systems and laying the groundwork for what would become the company's core integration architecture. The hub handles data exchange across APIs, databases, and file-based transfers at enterprise scale.

Digital2DNA — Ohio, United States

The technical foundation that would evolve into DocSimplify's Integration Hub.

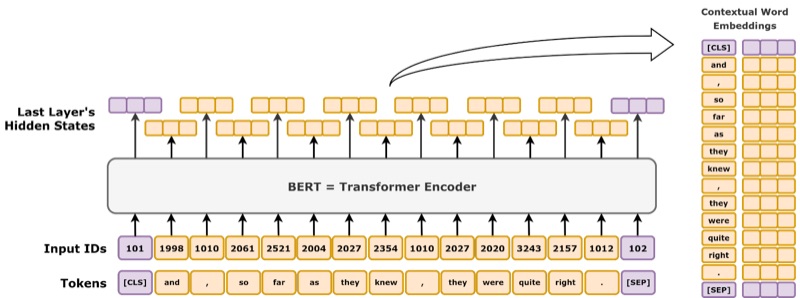

2018

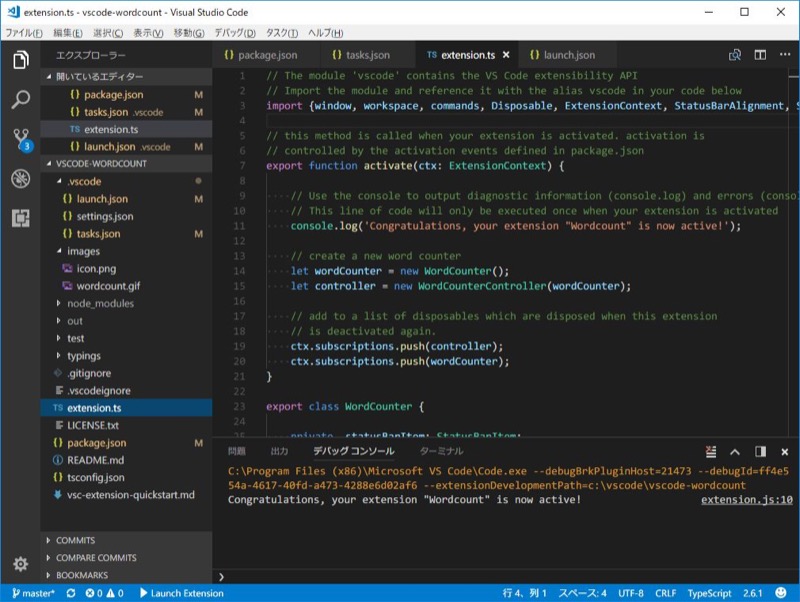

BERT Understands Context

Wikimedia Commons, CC BY-SA 4.0

Google released BERT, a bidirectional language model that conditions on the words both before and after each position. It set new state-of-the-art results on eleven NLP tasks and was rolled into Google Search the following year.

Jacob Devlin et al. — Google AI Language

Established the pre-train-then-fine-tune paradigm that powers modern AI.

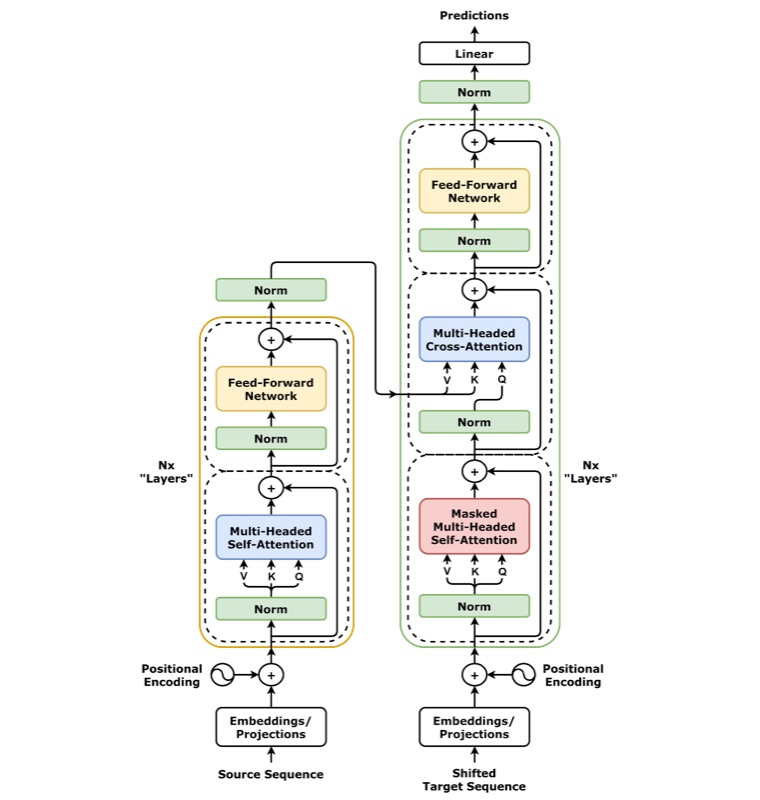

2017

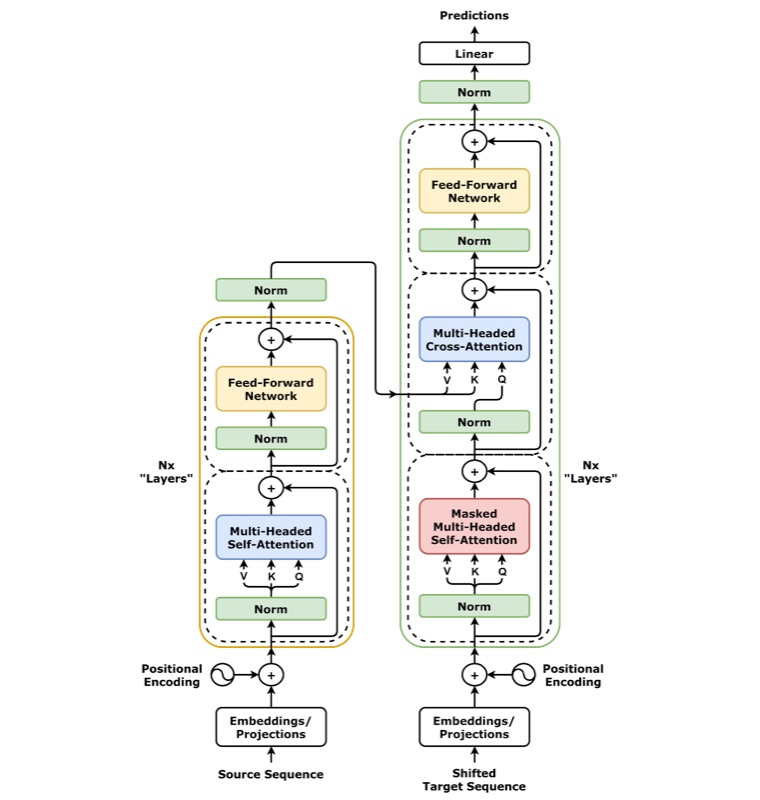

"Attention Is All You Need": The Transformer

Wikimedia Commons, CC BY 4.0

Eight researchers at Google published a paper introducing the Transformer architecture, replacing recurrence with self-attention. The paper's title was cheeky. Its impact was seismic. Every major AI model today - GPT, Claude, Gemini, LLaMA - is built on Transformers.

Ashish Vaswani, Noam Shazeer, Niki Parmar, and five others — Google Brain / Google Research

The single most consequential AI architecture paper in history.
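
To make "self-attention" concrete, here is a minimal sketch of scaled dot-product attention in plain Python. It is an illustration under simplifying assumptions, not the paper's full architecture: the learned query, key, and value projections, multiple heads, and positional encodings are all omitted, and the three toy `tokens` vectors are made up for the example.

```python
import math

def softmax(scores):
    # Subtract the max for numerical stability before exponentiating.
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(queries, keys, values):
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d_k)) V."""
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
                  for k in keys]
        weights = softmax(scores)
        # Each output is a weighted average of the value vectors.
        out = [sum(w * v[j] for w, v in zip(weights, values))
               for j in range(len(values[0]))]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights

# Hypothetical 2-dimensional token embeddings (assumption for illustration).
# Using the raw embeddings as Q, K, and V stands in for learned projections.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = self_attention(tokens, tokens, tokens)
```

Every token attends to every other token in a single step, which is what lets the architecture drop recurrence: no position has to wait for the previous one to be processed.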

2017

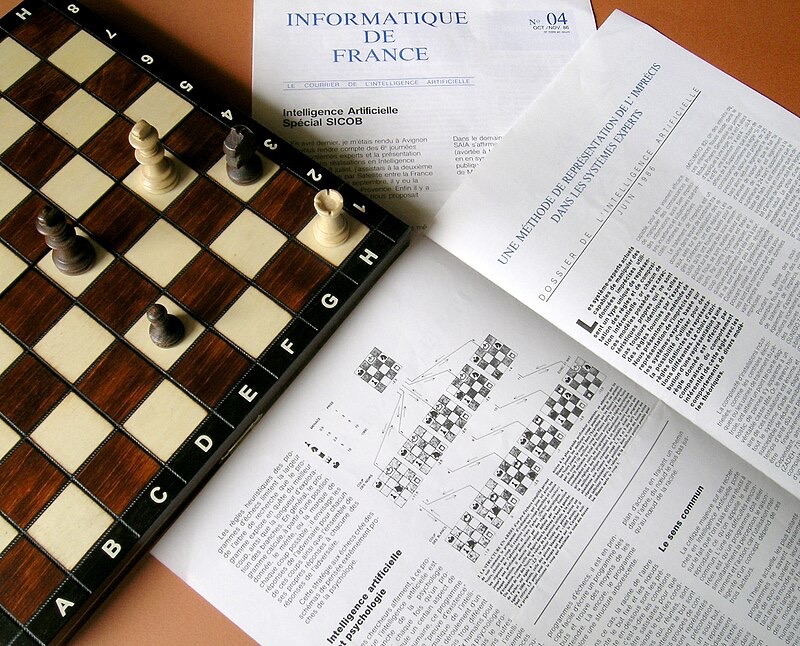

AlphaZero Masters Three Games in Hours

Wikimedia Commons (Courrier), CC0

DeepMind's AlphaZero taught itself chess, shogi, and Go from scratch, with no human game data. Within 24 hours it was playing at superhuman level in all three. Its style was described as "alien" and "beautiful" by grandmasters.

DeepMind team led by David Silver — DeepMind, London, England

Showed that general-purpose learning, starting from zero knowledge, could surpass decades of human-engineered AI in hours.

2016

First Care Management System Delivered

digital2DNA

Digital2DNA delivers its first custom care management system, establishing the company's foundation in healthcare product development and clinical workflow automation.

Digital2DNA — Ohio, United States

Proved the delivery model in healthcare - working software, shipped weekly.

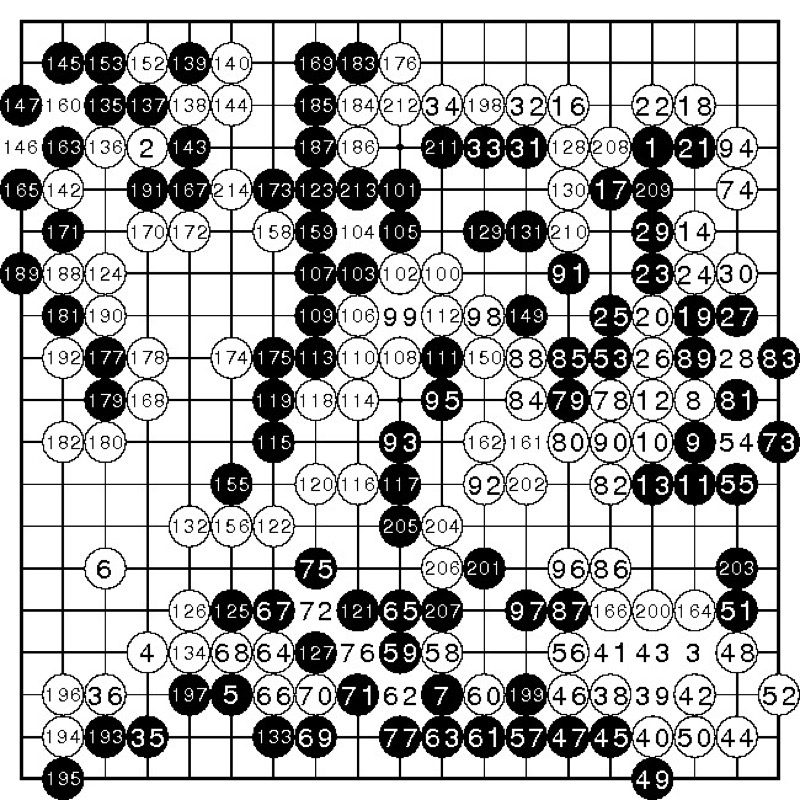

2016

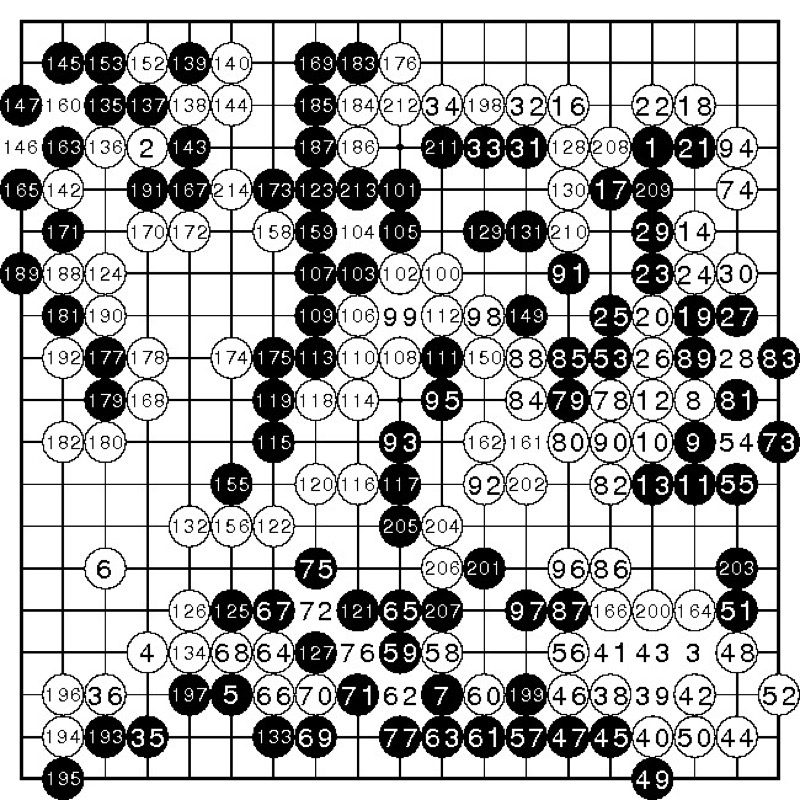

AlphaGo Defeats Lee Sedol

Wikimedia Commons, CC BY 2.0

DeepMind's AlphaGo beat Lee Sedol, one of the greatest Go players in history, four games to one. Go has more possible board positions than atoms in the observable universe. Move 37 of Game 2 was so creative that commentators called it "beautiful" and "not a human move."

DeepMind team led by David Silver and Demis Hassabis; Lee Sedol — Four Seasons Hotel, Seoul, South Korea

Demonstrated that machines could develop creative strategies beyond human intuition.

2014

Digital2DNA Founded

digital2DNA

Digital2DNA is founded with a simple thesis: healthcare technology should work for the people using it, not the other way around. The company is sketched out over lunch, born from frustration with the state of healthcare integration and a belief that software delivery could be radically better.

Michael Martin, Johan Botha — Ohio, United States

The beginning of a company built around weekly delivery and healthcare integration at scale.

2014

Generative Adversarial Networks Are Born

Wikimedia Commons, CC BY 2.0

Ian Goodfellow introduced GANs, in which two neural networks compete: a generator creates fake data, and a discriminator tries to detect the fakes. The idea allegedly came to him during an argument at a bar.

Ian Goodfellow et al. — Université de Montréal, Canada

Launched the era of AI-generated images, video, and synthetic data. Yann LeCun called GANs "the most interesting idea in the last ten years in machine learning."
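
The competition between the two networks is usually written as a two-player minimax game. As stated in the original GAN formulation, the discriminator $D$ tries to maximize the value function while the generator $G$ tries to minimize it, where $p_{\text{data}}$ is the real data distribution and $p_z$ is the noise distribution the generator samples from:

```latex
\min_G \max_D V(D, G) =
  \mathbb{E}_{x \sim p_{\text{data}}(x)}\big[\log D(x)\big]
  + \mathbb{E}_{z \sim p_z(z)}\big[\log\big(1 - D(G(z))\big)\big]
```

The first term rewards the discriminator for scoring real samples as real; the second rewards it for scoring generated samples $G(z)$ as fake, which is exactly what the generator works against.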

2013

DeepMind Teaches Itself Atari

Wikimedia Commons, CC BY-SA 3.0

A small London startup called DeepMind showed a deep reinforcement learning agent that learned to play Atari 2600 games from raw pixel input with no prior rules. Google acquired DeepMind in January 2014 for a reported $500 million or more.

Volodymyr Mnih, David Silver, and the DeepMind team — DeepMind, London, England

Combined deep learning with reinforcement learning for the first time at scale.

2012

AlexNet Ignites the Deep Learning Era

Wikimedia Commons, CC BY-SA 4.0

Krizhevsky, Sutskever, and Hinton entered a deep convolutional neural network in the ImageNet competition. It crushed the field, cutting the error rate nearly in half. The secret: training on GPUs. Computer vision would never be the same.

Alex Krizhevsky, Ilya Sutskever, Geoffrey Hinton — University of Toronto

Triggered an avalanche of deep learning research. Within two years, every top ImageNet entry used deep neural networks.

2011

Watson Wins Jeopardy!

Wikimedia Commons, Fair Use

IBM's Watson defeated Ken Jennings and Brad Rutter. Jennings wrote on his screen: "I for one welcome our new computer overlords."

IBM Research; Ken Jennings, Brad Rutter — Sony Pictures Studios, Culver City, California

Showed AI could handle ambiguous, pun-laden natural language, not just the formal rules of a board game.

1997

Deep Blue Defeats Kasparov

Wikimedia Commons, CC BY 2.0

IBM's Deep Blue beat reigning world chess champion Garry Kasparov 3.5 to 2.5. Deep Blue evaluated 200 million positions per second using brute-force search. Kasparov accused IBM of cheating, IBM dismantled the machine, and the result stood.

IBM team led by Feng-hsiung Hsu; Garry Kasparov — New York City

First time a computer beat a reigning world chess champion under tournament conditions.

1992

TD-Gammon: Self-Taught Backgammon

Wikimedia Commons, CC BY-SA 3.0

Gerald Tesauro at IBM built a neural network that learned backgammon by playing 1.5 million games against itself. It reached expert level, discovering strategies that surprised human professionals. The "TD" stands for temporal-difference learning, a reinforcement learning method applied here long before the approach became mainstream.

Gerald Tesauro — IBM Thomas J. Watson Research Center

First demonstration that self-play could produce expert-level game strategy.

1986

Backpropagation Makes Neural Networks Trainable

Wikimedia Commons, CC BY 4.0

Rumelhart, Hinton, and Williams published a method for training multi-layer neural networks by propagating errors backward. The math had existed before, but this paper showed it worked in practice. It would take decades of hardware improvement before the full impact was felt.

David Rumelhart, Geoffrey Hinton, Ronald Williams — UC San Diego / Carnegie Mellon University

Provided the core training algorithm still used in deep learning today. Hinton would win the 2024 Nobel Prize partly for this work.

1980

Expert Systems Go Commercial

Wikimedia Commons, CC BY-SA 3.0

R1/XCON, developed at Carnegie Mellon for Digital Equipment Corporation, began configuring computer orders. It saved DEC an estimated $40 million per year. This kicked off a boom in rule-based expert systems.

John McDermott — Carnegie Mellon University / Digital Equipment Corporation

AI generated real business value for the first time.

1970–1980

The First AI Winter

Wikimedia Commons, Public Domain

Government funding agencies lost patience. The 1973 Lighthill Report in the UK concluded that AI had failed to deliver on its promises. DARPA slashed budgets. Researchers scattered to other fields or worked in obscurity.

Sir James Lighthill — United Kingdom and United States

Established a recurring pattern in AI: overpromise, underdeliver, lose funding, repeat.

1969

Perceptrons: The Book That Froze a Field

Wikimedia Commons, CC BY 2.0

Minsky and Papert published "Perceptrons," mathematically proving that single-layer perceptrons could not solve important classes of problems, including the XOR function. The book's influence went beyond its claims, effectively killing neural network funding for over a decade.

Marvin Minsky, Seymour Papert — MIT, Cambridge, Massachusetts

Triggered the first "AI Winter." Neural network research nearly disappeared from academia until the mid-1980s.
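
The limitation is easy to reproduce. The sketch below trains a single threshold unit with a Rosenblatt-style learning rule on two tiny datasets: AND, which is linearly separable, and XOR, which is not. The function name, learning rate, and epoch cap are choices made for this illustration, not anything taken from the book.

```python
def train_perceptron(samples, epochs=100, lr=1.0):
    """Train one threshold unit; return (weights, bias, converged)."""
    w = [0.0, 0.0]
    b = 0.0
    for _ in range(epochs):
        errors = 0
        for (x1, x2), target in samples:
            pred = 1 if w[0] * x1 + w[1] * x2 + b > 0 else 0
            delta = target - pred
            if delta != 0:
                # Nudge the decision boundary toward the misclassified point.
                w[0] += lr * delta * x1
                w[1] += lr * delta * x2
                b += lr * delta
                errors += 1
        if errors == 0:
            # A full pass with no mistakes: the data has been separated.
            return w, b, True
    return w, b, False

AND_DATA = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
XOR_DATA = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]

_, _, and_converged = train_perceptron(AND_DATA)
_, _, xor_converged = train_perceptron(XOR_DATA)
```

Training on AND settles after a handful of passes, while training on XOR never completes an error-free pass no matter how large `epochs` is, because no single straight line separates the two XOR classes. Adding a hidden layer removes the limitation.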

1966

ELIZA: The First Chatbot

Wikimedia Commons, CC BY-SA 4.0

Joseph Weizenbaum created ELIZA, a program that mimicked a Rogerian psychotherapist by rephrasing users' statements as questions. Weizenbaum was disturbed when people formed emotional attachments to it, spending hours confiding in what amounted to a parlor trick.

Joseph Weizenbaum — MIT, Cambridge, Massachusetts

Demonstrated that humans readily anthropomorphize machines.
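
The "parlor trick" can be sketched in a few lines. The example below is a loose illustration of the rephrasing idea, not Weizenbaum's original script: the three rules, the pronoun table, and the fallback reply are all invented for this sketch.

```python
import re

# Swap first-person words for second-person ones (illustrative subset).
REFLECTIONS = {"i": "you", "my": "your", "me": "you", "am": "are"}

# Each rule pairs a pattern with a question template (hypothetical rules).
RULES = [
    (re.compile(r"\bi feel (.*)", re.IGNORECASE), "Why do you feel {0}?"),
    (re.compile(r"\bi am (.*)", re.IGNORECASE), "How long have you been {0}?"),
    (re.compile(r"\bmy (.*)", re.IGNORECASE), "Tell me more about your {0}."),
]

def reflect(fragment):
    """Rewrite the captured fragment from the user's point of view to the bot's."""
    return " ".join(REFLECTIONS.get(word, word) for word in fragment.lower().split())

def respond(statement):
    """Turn a statement into a question using the first matching rule."""
    text = statement.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.search(text)
        if match:
            return template.format(reflect(match.group(1)))
    # Content-free prompt when nothing matches.
    return "Please go on."

reply = respond("I feel ignored by my boss.")
```

Here `reply` is "Why do you feel ignored by your boss?". The program has no model of feelings or bosses; it only matches text and swaps pronouns, which is what made users' attachment to it so striking.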

1957

The Perceptron: Hardware That Learns

Wikimedia Commons, Public Domain

Frank Rosenblatt built the Mark I Perceptron at Cornell, a machine that could learn to recognize simple patterns. The New York Times reported it would eventually "walk, talk, see, write, reproduce itself and be conscious of its existence." The hype cycle had begun.

Frank Rosenblatt — Cornell Aeronautical Laboratory, Buffalo, New York

First implementation of a machine that learned from data rather than explicit programming. Also the first AI to be wildly overhyped by the press.

1956

The Dartmouth Workshop: AI Gets Its Name

Wikimedia Commons, CC BY-SA 3.0

John McCarthy organized a two-month summer workshop at Dartmouth College. He coined the term "artificial intelligence" in the funding proposal. The attendees mapped out the field's research agenda. They believed most problems of AI would be substantially solved within a generation. They were wrong by about five generations.

John McCarthy, Marvin Minsky, Claude Shannon, Herbert Simon, Allen Newell — Dartmouth College, Hanover, New Hampshire

Formally established AI as an academic discipline.

1950

Turing Asks: Can Machines Think?

Wikimedia Commons, Public Domain

Alan Turing published "Computing Machinery and Intelligence," proposing what became known as the Turing Test. Rather than defining intelligence, he reframed the question: if a machine can fool a human into thinking it is human, does the distinction matter?

Alan Turing — University of Manchester, England

Gave the field its central philosophical question and a practical benchmark that would drive research for decades.

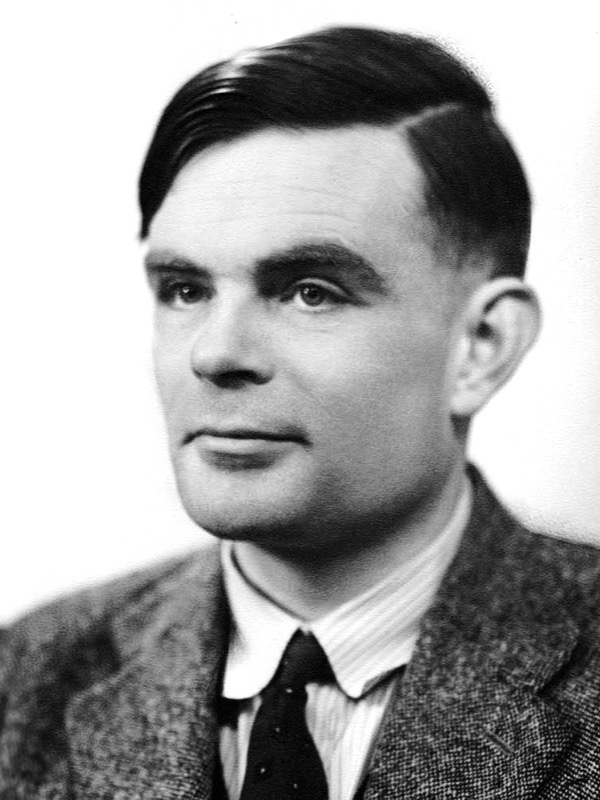

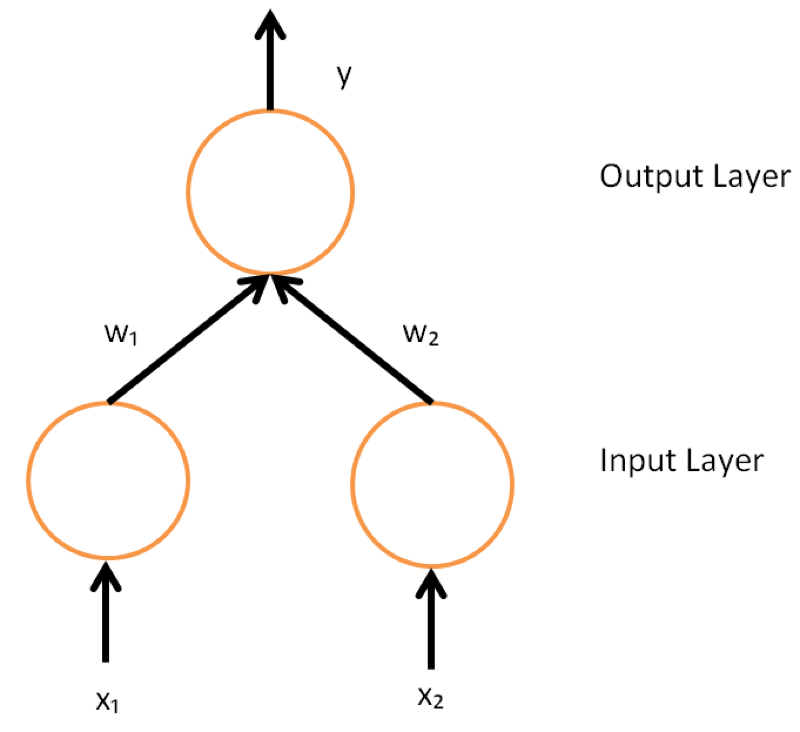

1943

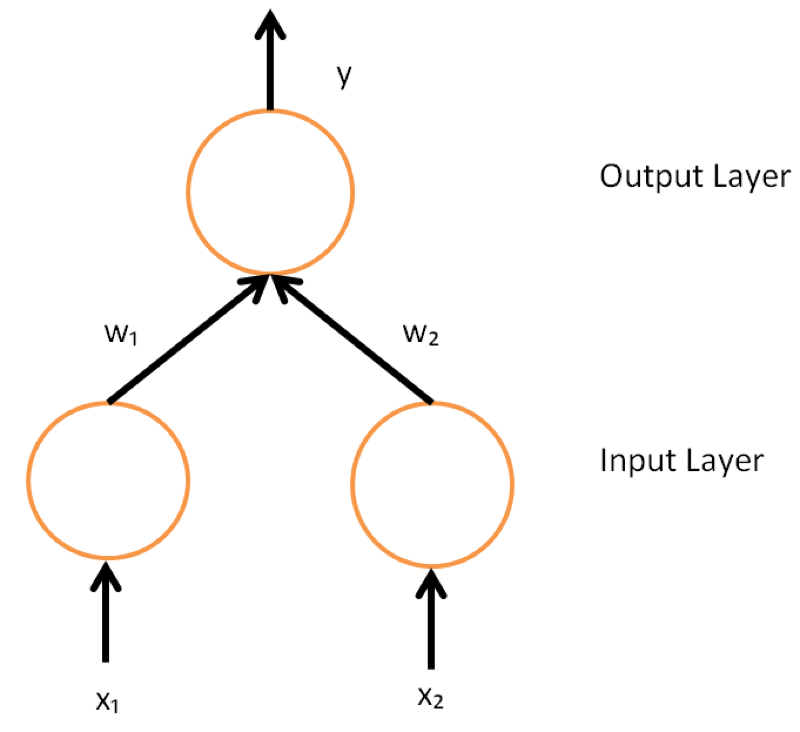

The First Artificial Neuron

Wikimedia Commons, CC BY-SA 3.0

Warren McCulloch and Walter Pitts published "A Logical Calculus of the Ideas Immanent in Nervous Activity," the first mathematical model of a neural network. They proved that simple connected threshold units could, in principle, compute any logical function.

Warren McCulloch, Walter Pitts — University of Chicago

Established that networks of simple elements could perform computation, laying the theoretical foundation for all neural networks to come.
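
A small sketch shows how threshold units compose into logic. It is a simplified rendering of the idea rather than the paper's exact formalism: inhibition is modeled here with a negative weight, and the gate names and thresholds are choices made for this example.

```python
def mp_neuron(inputs, weights, threshold):
    """Fire (return 1) when the weighted input sum reaches the threshold."""
    total = sum(w * x for w, x in zip(weights, inputs))
    return 1 if total >= threshold else 0

def AND(a, b):
    return mp_neuron([a, b], [1, 1], 2)   # both inputs must be active

def OR(a, b):
    return mp_neuron([a, b], [1, 1], 1)   # one active input is enough

def NOT(a):
    return mp_neuron([a], [-1], 0)        # an active input suppresses firing

def XOR(a, b):
    # Two layers of units: (a OR b) AND NOT (a AND b).
    return AND(OR(a, b), NOT(AND(a, b)))

truth_table = [(a, b, XOR(a, b)) for a in (0, 1) for b in (0, 1)]
```

Because AND, OR, and NOT are enough to build any Boolean expression, wiring such units together in layers can realize any logical function of the inputs.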